This article was co-authored by Iakovina Kindylidi, International Adviser at Vieira de Almeida, and Tiago Sérgio Cabral, Associate at Vieira de Almeida.

On April 21st, the European Commission (“EC”) presented its long-awaited Proposal for a Regulation on a European Approach for Artificial Intelligence, proposing a single set of rules to regulate Artificial Intelligence (“AI”) in the European Union (“Proposal for an AI Regulation” or “Proposal”). The Proposal came four years after the European Parliament called upon the EC to frame a legislative proposal for a set of civil law rules on robotics and artificial intelligence.

The Proposal marks the first comprehensive regulatory initiative in the context of AI. It aims to increase the development and adoption of safe AI while fostering the fundamental rights of EU citizens and establishing the EU as the world’s standard-setter for AI regulation, in line with what happened with data protection and the GDPR.

Scope of application

The Proposal has a broad scope of application, incorporating and establishing obligations for most players in the AI supply-chain including:

Moreover, the territorial scope of the Proposal extends to providers and users of AI systems that are not established in the EU, when the AI system is to be placed on the market or put into service in the EU or the output produced by the system will be used in the EU (Article 2).

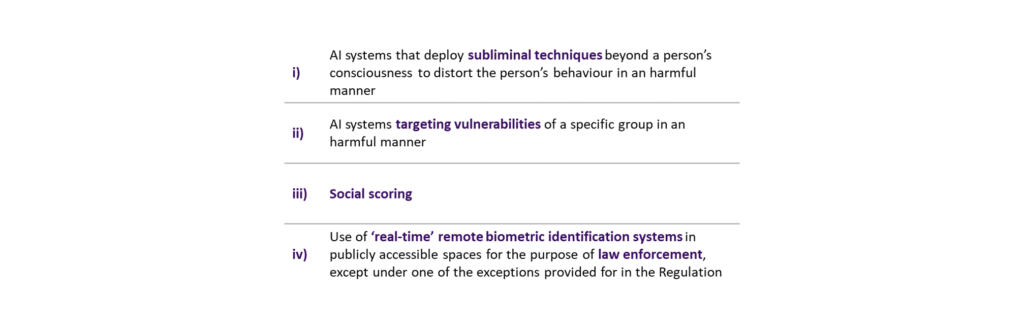

Forbidden AI uses

Under Article 5 of the Proposal, a number of uses of AI are strictly forbidden in the EU. Specific exceptions are identified in relation to point (iv) below, such as searching for potential victims of a crime, preventing imminent threats, and finding people accused of crimes punishable by a custodial sentence or a detention order for a maximum period of at least three years.

High-risk AI

As stated in the beginning, the Proposal does not wish to restrict the design and adoption of AI applications. Therefore, the majority of the rules introduced refer only to high-risk AI (Articles 6-51). In general, for an AI system to be classified as high-risk, the conditions identified in Article 6 of the Proposal should be fulfilled cumulatively.

To facilitate the identification of high-risk AI, Annex III contains a numerus clausus list of high-risk uses of the technology. The need to amend this list will be assessed annually by the EC, and it may be expanded via delegated acts pursuant to the criteria of Article 7 of the Proposal.

Some of the uses considered high-risk AI are:

It should be noted that listing the high-risk AI cases increases transparency and gives stakeholders some legal certainty, but it may also lack flexibility. That certainty is not complete: stakeholders whose systems are not on the list may be lulled into a false sense of security, forego adequate due diligence and then be surprised when a revision adds them. Whether this method succeeds, and whether it survives the trialogue negotiations, remains to be seen.

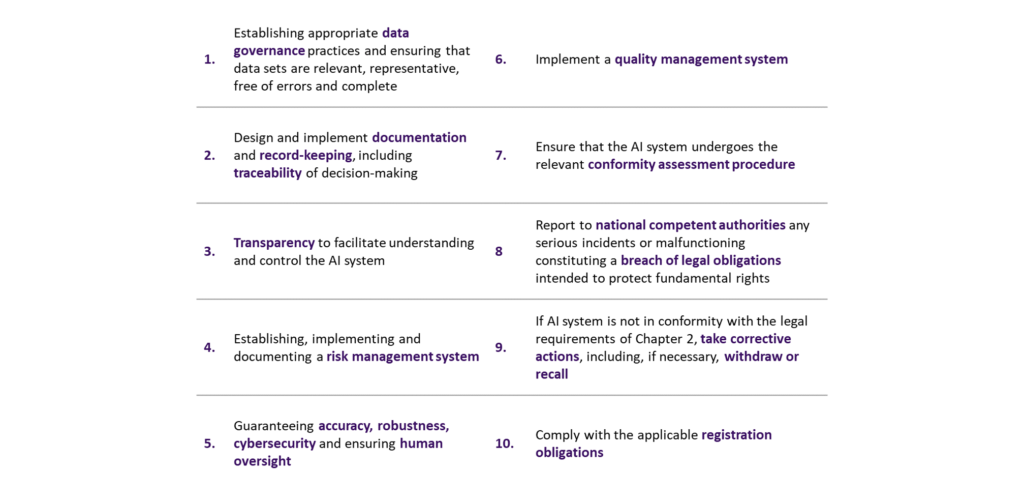

Obligations for high-risk AI

All entities involved in the AI supply chain are required to comply with certain obligations. Nonetheless, AI providers are particularly burdened by the rules introduced in Chapter 2 of the Proposal. Indicatively, some of the key requirements are:

Moreover, users are required to: a) monitor high-risk AI for serious incidents, malfunctions or signs that it presents a risk at a national level; b) store logs when these are under their control; and c) when exercising control over input data, ensure that it is relevant for the AI system’s purpose. It should be noted, however, that under the Proposal any stakeholder in the AI value chain may be considered a provider, and therefore be required to comply with the corresponding obligations, when it places a system on the market or puts it into service under its own name or trademark, modifies the system’s purpose or makes substantial adaptations to it. These criteria are of particular importance to AI users, as fulfilling one of them may, in fact, be common for certain types of users of AI systems. It is therefore important to assess this matter before choosing and deploying a given solution.

Specific transparency obligations for certain AI systems

Without prejudice to the obligations identified above, under the risk-based approach, specific transparency obligations are imposed on certain types of AI, irrespective of whether they are considered high-risk, due to their interaction with humans. In particular:

Penalties

Although Member States may establish additional effective, proportionate and dissuasive rules regarding penalties, the Commission has introduced mandatory fines for certain infringements. Penalties introduced by Member States cannot derogate from the uniform rules below.

Supervisory Authority

Under the Proposal, a new European body will be established: the European Artificial Intelligence Board (“EAIB”). The EAIB will be tasked with promoting the coherent and uniform application of the Regulation, including through the development of standards and the issuance of guidelines. Moreover, Member States should designate a national supervisory authority, which will be responsible for the implementation and application of the Regulation, for facilitating communication with the EC and, finally, for representing the Member State at the EAIB.

Conclusion

The Proposal for an AI Regulation follows a holistic approach. It aims to establish strict rules for high-risk AI and heavy penalties for non-compliance, while facilitating AI development through cooperation with supervisory authorities, the creation of regulatory sandboxes and measures to reduce the regulatory burden on small-scale providers, users and start-ups.

The Proposal has some shortcomings that may require further analysis or improvement, such as the lack of liability or consumer protection provisions, the unclear enforcement mechanism and the failure to address the sustainability of AI, at least as part of a voluntary labelling scheme. Nevertheless, the Commission’s Proposal undoubtedly has merit and will become a point of reference for AI regulations outside of the EU.

Following its publication, the trialogue discussions will commence to reach a decision on the final text, a process that may take years.
