The digital footprint of young people is continuously growing, and as evidence of the negative effects of social media on youth mental health mounts, the debate over how to effectively protect minors in the digital environment has intensified in recent years. This topic has not escaped attention in the European Union either. Concerns about cyberbullying, depression, and anxiety have become particularly prominent. Research has shown “that problematic social media users also reported lower mental and social well-being and higher levels of substance use.”

Several regulatory initiatives now shape this discussion. The Digital Services Act, particularly Article 28, establishes ground rules for online intermediaries and platforms, while the Commission’s 2025 guidelines further elaborate measures aimed at improving child safety online. At the same time, in response to the increasing prevalence of online child sexual abuse material, the proposed “Chat Control” Regulation highlights the tension between protection and privacy. In addition, more restrictive proposals within the European Parliament advocate an even more ambitious solution to mitigate the risks to children’s mental health. In their report, MEPs are considering banning social media use until the age of 16 as the most effective means of protecting the well-being of minors.

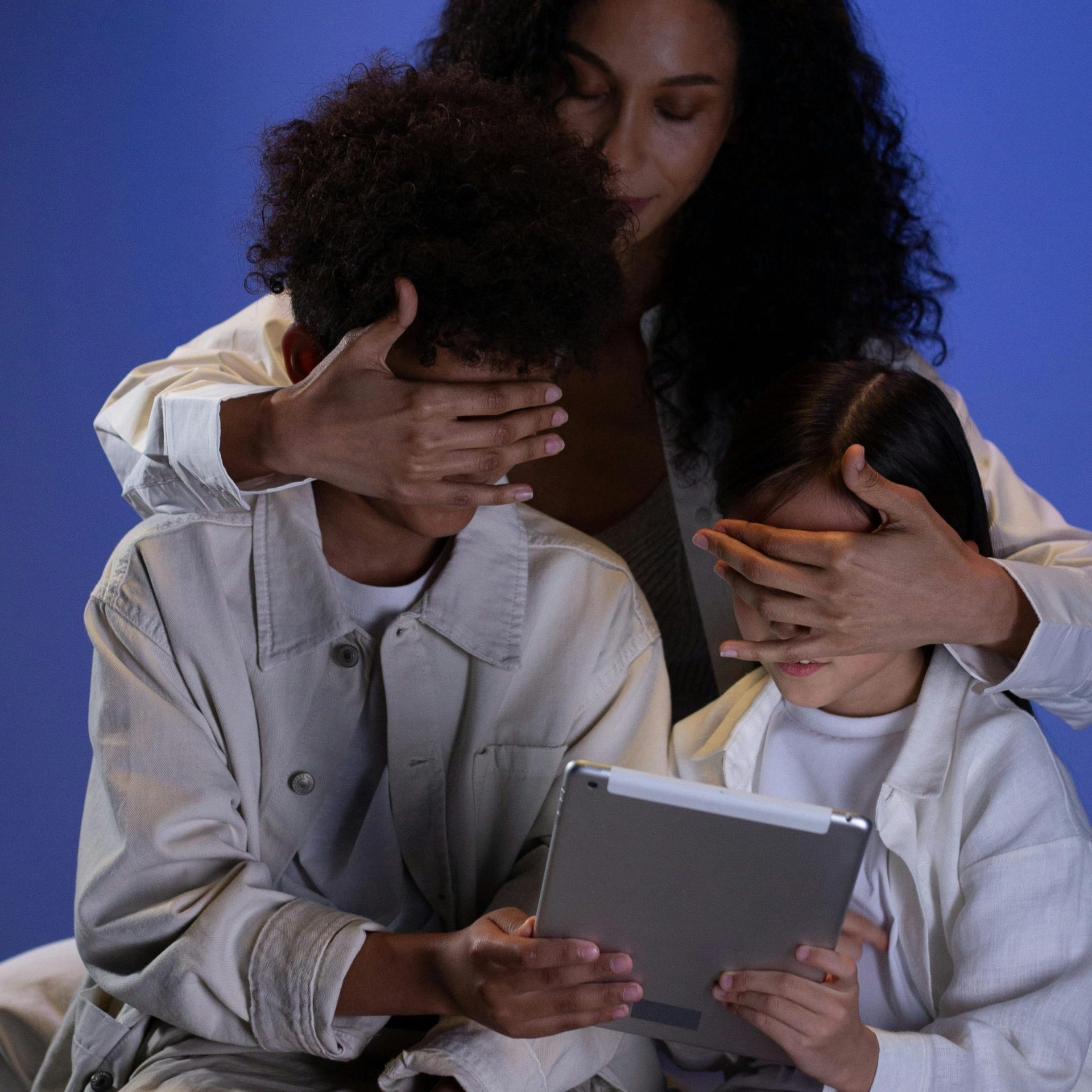

In search of a feasible path to a child-friendly digital environment, this article addresses the dilemma of whether the EU should regulate children’s access to social media through a ban or through safer design and stronger moderation.

The legal framework in the EU

The EU legal framework for this debate is built on a balance between competing fundamental rights. First and foremost, account has to be taken of Article 11 of the EU Charter of Fundamental Rights (CFREU), which protects freedom of expression and information and the pluralism of the media. In this spirit, the EU cannot regulate online speech in a way that amounts to broad censorship or unjustified interference with access to information. On the other hand, when considering the child’s best interests, as provided for in Article 24 CFREU, the Union may be compelled to adopt targeted regulatory measures that limit certain aspects of online communication in order to ensure a safe digital environment for minors.

In the digital environment, it is essential to strike a balance, as measures aimed at protecting minors often affect other fundamental rights. In particular, mechanisms such as age verification or client-side scanning raise significant concerns regarding potential infringements of the right to privacy.

None of these rights is absolute, and each may, under certain circumstances, be subject to limitations. However, any such interference must comply with the principles of legality, legitimacy, and proportionality, requiring in particular that the least restrictive means be employed.

The question then becomes how to effectively balance the right to freedom of expression with the rights of the child, so that both are proportionately safeguarded. A key concern is that a blanket ban on social media access for minors could unduly restrict their right to information, thereby limiting their opportunities for expression and participation. On the other hand, strict content moderation risks opening the door to overreach, potentially leading to disproportionate censorship and undermining the open nature of online discourse.

Measures implemented by the EU

In line with the principle “what applies offline, also applies online,” adopted by the Human Rights Council in 2012, the European Union has developed a legal framework intended to regulate online behaviour while safeguarding fundamental rights. One of the central instruments in this regard is the Digital Services Act (DSA), which establishes obligations for online intermediaries and platforms and aims to reduce illegal and harmful content. Its Art. 28 pays particular attention to the protection of minors online, emphasizing the need to “put in place appropriate and proportionate measures to ensure a high level of privacy, safety, and security of minors.” Moreover, the obligation on very large online platforms to carry out annual risk assessments raises their awareness of the risks their users face, so that these can be effectively mitigated (Art. 34 and 35 DSA). The Commission’s 2025 guidelines further strengthen the online safety of young people by basing all their recommendations on three principles: “children’s rights come first, safety by design, and understanding user needs.” In practice, this means that platforms embed safety and privacy measures into their services from the beginning instead of responding only after harm has occurred.

Despite the development of an important child-safety framework under the DSA, significant gaps remain. Article 28 is drafted in relatively broad terms, and the lack of detailed standards and uniform age-verification requirements may lead to inconsistent implementation across platforms. Another issue arises from the criteria for identifying illegal content, which are based on both EU law and national legislation. As a result, platforms must navigate differing standards of freedom of expression across Member States, leading to legal uncertainty and inconsistent protection for minors throughout the Union. In addition, systemic risk assessments have been criticised for remaining too general and failing to translate concerns about minors into concrete changes in platform architecture.

Protection of minors online: Australia vs. China

As the protection of minors in the digital environment has become an increasingly prominent regulatory concern, it is useful to examine how jurisdictions in different parts of the world approach these challenges.

The debate has evolved since the 2005 Joint Declaration, which stated that online service providers should not be held liable for content they did not create. However, this approach has gradually shifted towards greater responsibilities for social media platforms. One notable example is Australia, which has adopted a highly interventionist regulatory model. By contrast, China has implemented a state-driven approach focused on strict content control and usage limitations, particularly targeting minors.

1. Australia

At the end of 2024, Australia passed a new and highly restrictive law banning the use of social media by those under 16 years of age. This decision has sparked significant debate as to whether such a restrictive approach constitutes an effective and proportionate means of protecting children online.

Critics have raised concerns about disproportionate interference with minors’ right to freedom of expression and their ability to participate in society. Moreover, questions have been raised regarding the practical effectiveness of the ban in enhancing online safety. Supporters, however, justify the legislation as a necessary response to growing concerns about the mental health of young users, pointing to a study conducted in 2025 that found that 7 out of 10 children are exposed to harmful content online. The law exposes 10 major platforms, including Instagram, TikTok, and YouTube, to substantial financial penalties in cases of non-compliance.

Nevertheless, significant implementation challenges remain. Age-verification mechanisms, particularly those relying on facial recognition technologies, continue to face reliability concerns. Furthermore, the limited scope of the legislation raises questions about its overall effectiveness: it omits platforms such as dating apps and online gaming platforms, which have allegedly incited suicidal thoughts in children and engaged in “sensual” discussions with minors. Additional concerns relate to enforcement and circumvention, as evidenced by increased installations of VPN services and the creation of false accounts. Finally, data protection risks remain salient, especially in light of previous large-scale data breaches in which confidential personal information was compromised.

2. China

China represents a markedly different approach characterised by extensive state control over digital platforms and a strong emphasis on cognitive stimulation. The Chinese version of TikTok, known as Douyin, operates within a highly regulated environment, where content is subject to strict moderation and censorship in line with state policies.

While such rules clearly run counter to freedom of expression, particular attention should be paid to the platform’s design features targeting minors. Most importantly, after the implementation of China’s Law on the Protection of Minors in 2021, the platform introduced a daily screen-time limit of 40 minutes for teenagers. The platform’s design goes even further and blocks access to the app between 22:00 and 6:00. In addition, the app prioritises educational and age-appropriate content for young users and does not feature “brain-rot” content.

This design-based approach aims to shape the digital environment itself, rather than restricting access altogether. Although its effectiveness, as well as its broader implications for fundamental rights, remains subject to ongoing debate, it demonstrates a different variant of a child-friendly approach.

Recommendation for new EU measures

The dilemma between a total ban and moderated access reveals a fundamental question: Should we change the child to fit the digital environment, or change the digital environment to fit the child? Australia’s prohibitive model risks isolating minors and encouraging circumvention through VPNs. Conversely, the Chinese model demonstrates the benefits of educational content and time limits but at the cost of fundamental freedoms.

The European Union, bound by the CFREU, should adopt an approach that is consistent with the principle of proportionality and minimises interference with fundamental rights. In this context, a complete ban on social media access for minors appears overly restrictive and difficult to justify under EU law.

Instead of banning entire platforms, the EU should concentrate on a safety-by-design approach. In particular, platforms should be required to restrict certain engagement-driven features for minors by default. These include so-called “dark patterns,” such as infinite scroll, “streaks,” and autoplay, which are designed to maximize user retention. Such a measure could mirror the Chinese “healthier” model without the state censorship. Additionally, restricting the use of social media during “night hours” could improve the quality of sleep. Regarding data privacy, the new EU Digital Identity Wallet may help keep users’ data safe. Moreover, substantial attention should be paid to educating young people about the traps they can encounter online.